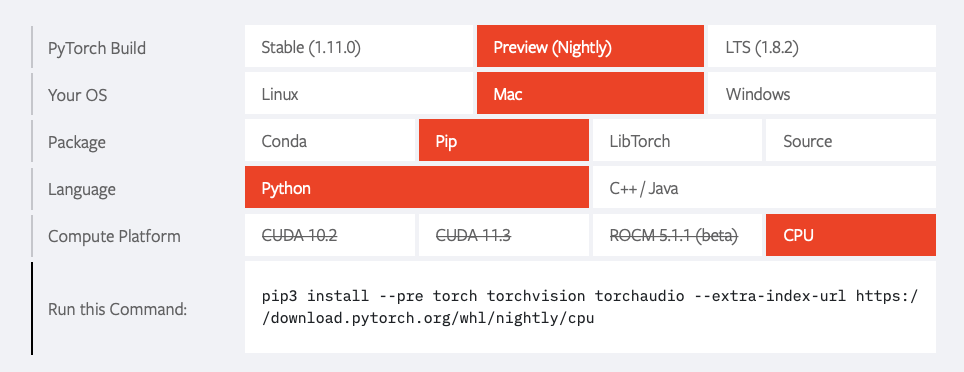

Installing PyTorch 1.5 for CPU on Windows 10 with Anaconda 2020.02 for Python 3.7 | James D. McCaffrey

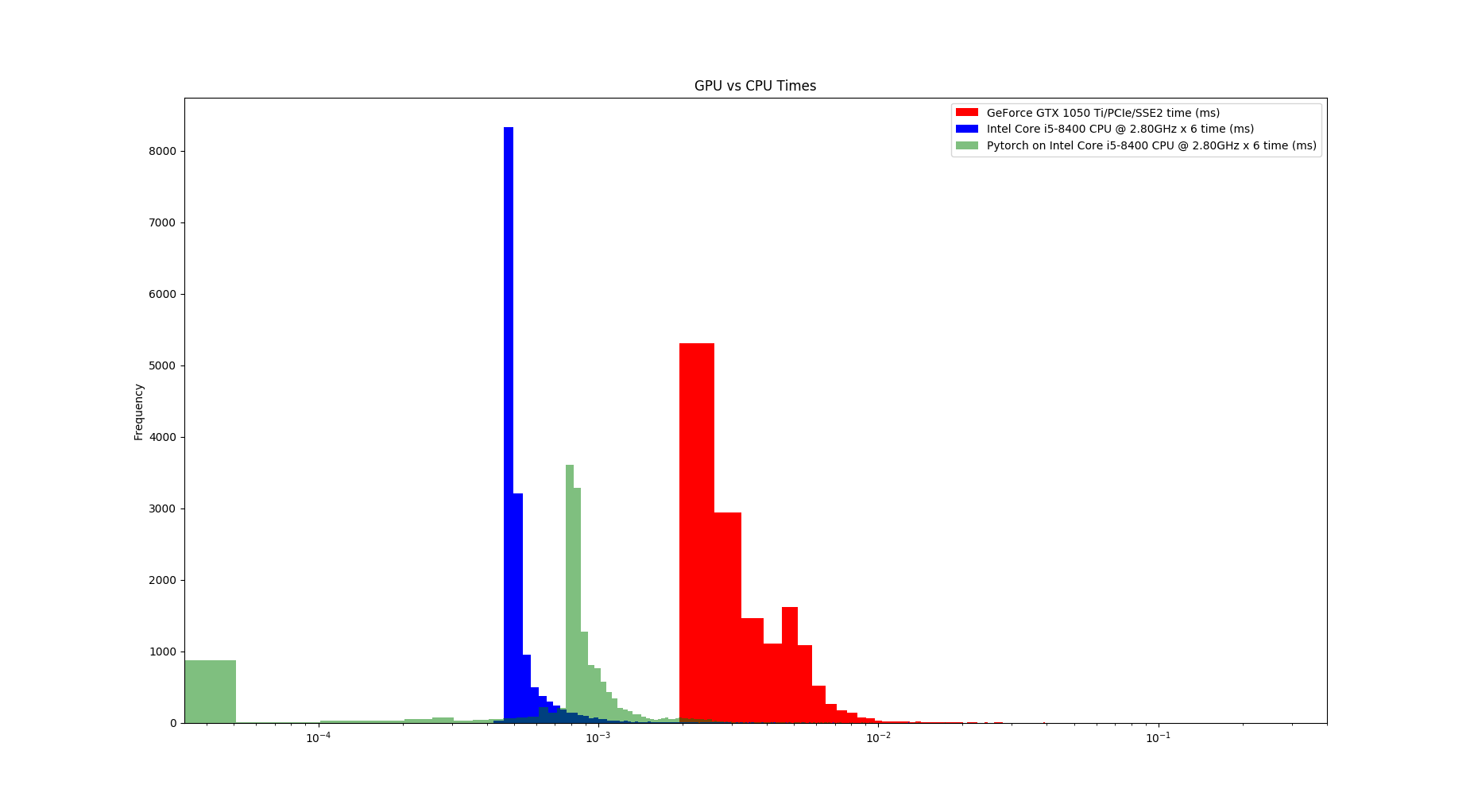

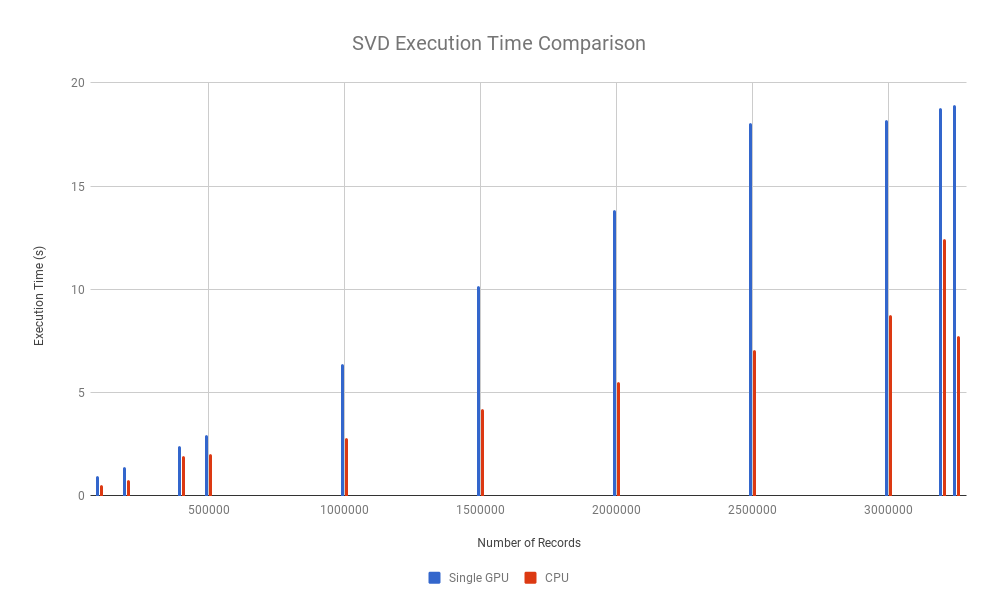

Comparison between CPU performance of PZnet, Tensorflow and PyTorch... | Download Scientific Diagram

Can't install Pytorch on PyCharm: No matching distribution found for torch ==1.7.0+cpu - Stack Overflow

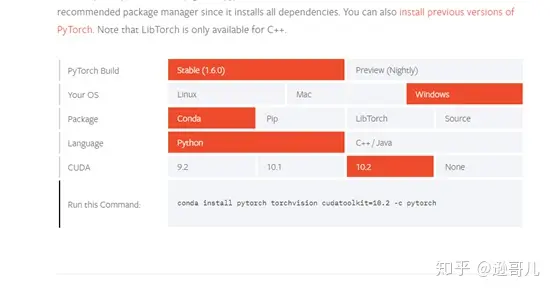

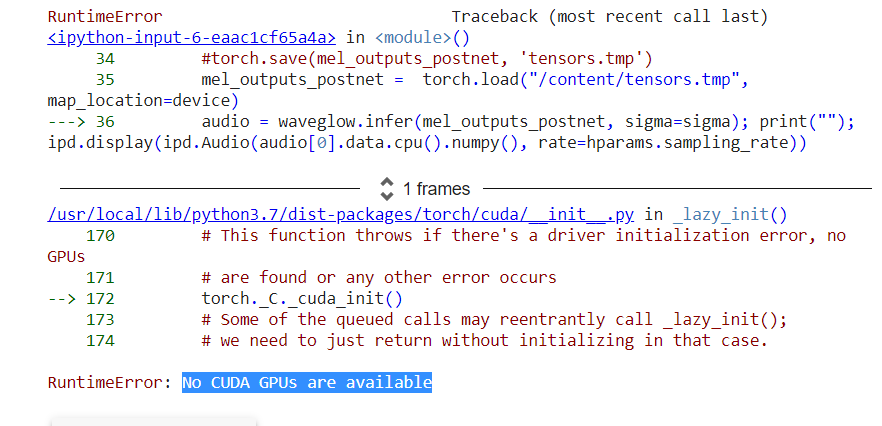

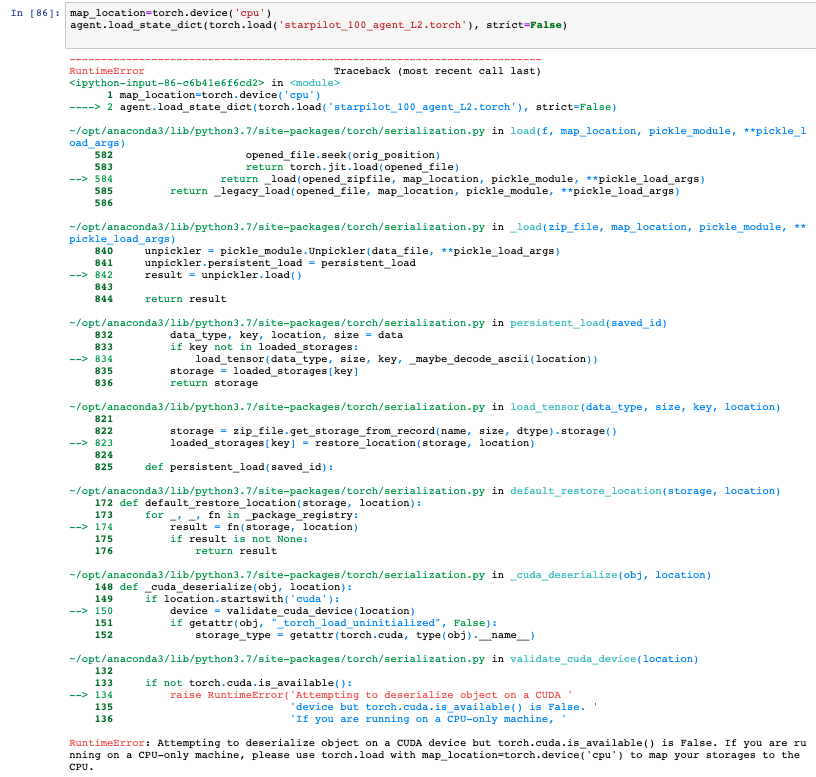

PyTorch: Converting GPU code to a CPU version (RuntimeError: torch.cuda.FloatTensor is not enabled.) - How to change code to run on CPU without a discrete GPU - weixin_39450145's blog - CSDN Blog

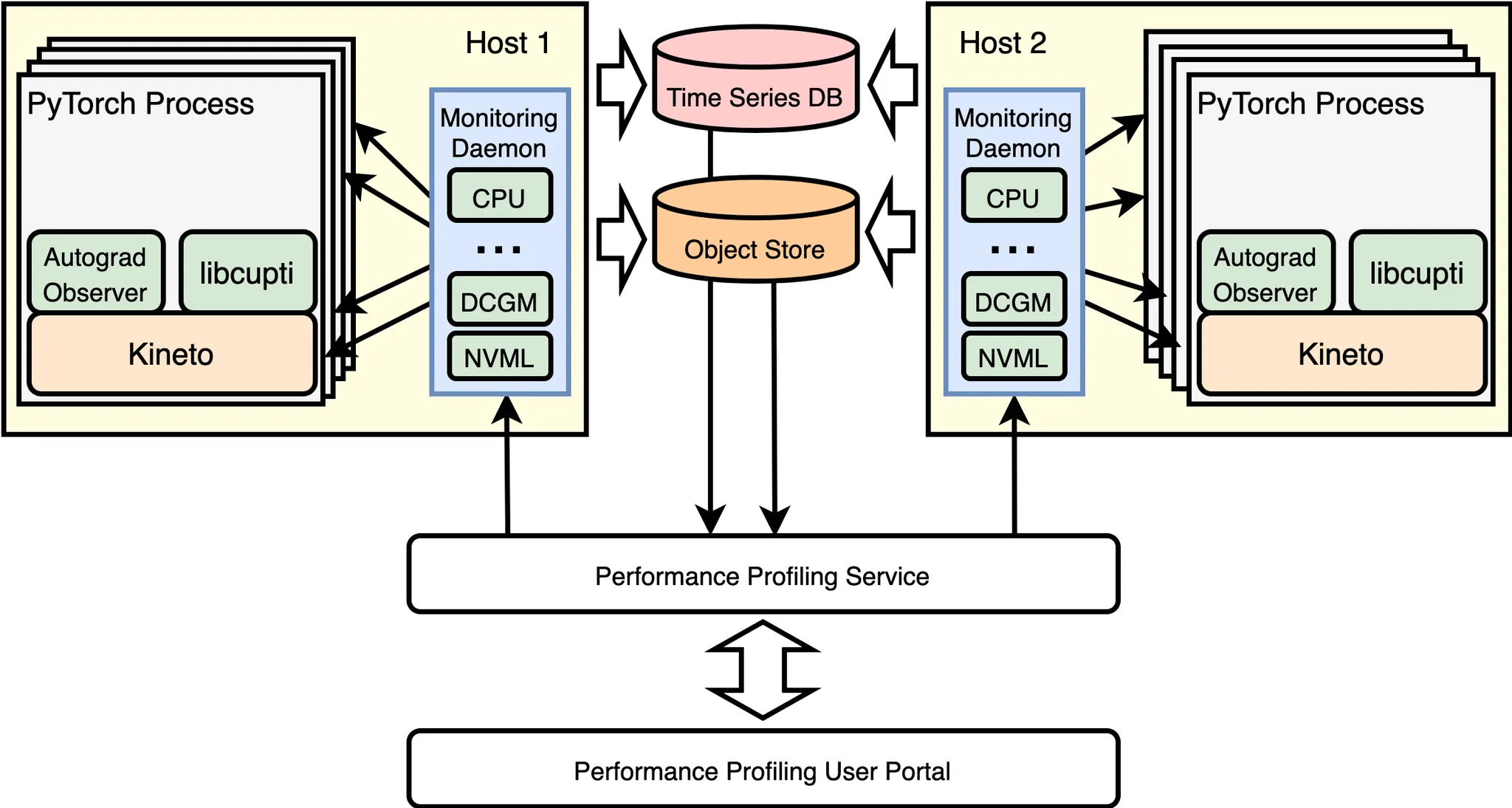

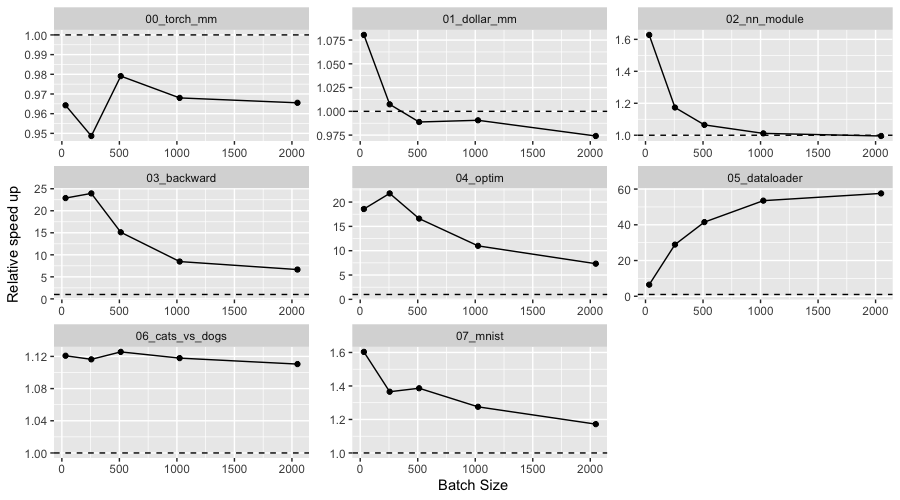

Grokking PyTorch Intel CPU performance from first principles — PyTorch Tutorials 2.0.0+cu117 documentation

CPU version of torchvision requires non-CPU version of torch · Issue #5393 · pytorch/vision · GitHub